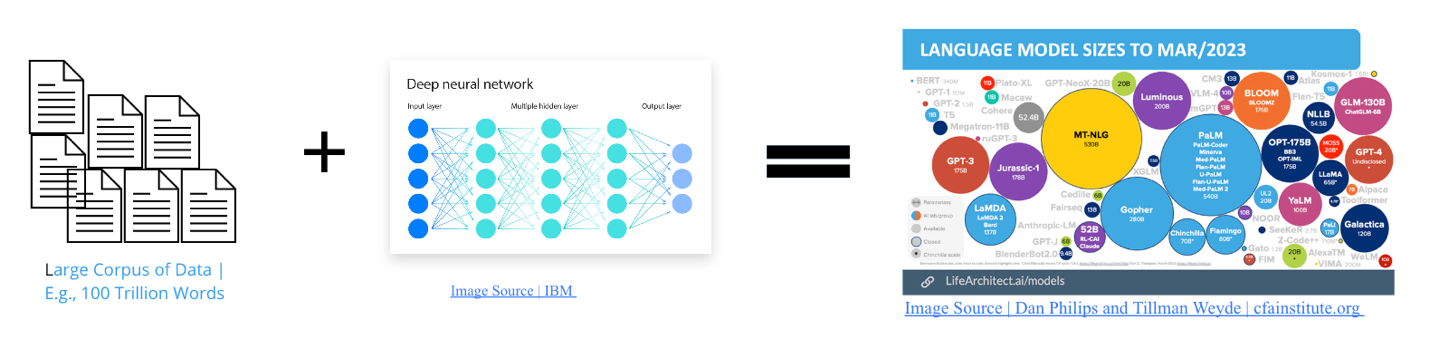

Large language models (LLMs) are multi-layered neural networks, or deep learning models, pretrained on a large corpus of data (IBM.com). Typically, a very large text corpus (on the order of trillions of words) is sourced from the internet, wikis, journals, etc.; see Figure 1. A typical LLM consists of billions of internal variables, popularly known as parameters. These parameters allow the model to learn patterns and context, and to perform a variety of natural language processing (NLP) tasks such as text generation, language translation, and sentiment analysis. LLMs are built on the transformer architecture, which addresses inherent limitations of recurrent neural networks such as difficulty capturing long-range dependencies and processing sequences in parallel. A detailed explanation of how the transformer architecture works is beyond the scope of this article. However, it is important to note that a typical transformer includes the following computational techniques: embeddings, attention, and encoder (and/or decoder) layers. These mechanisms give LLMs the ability to generate coherent and contextually relevant text, answer questions, summarize information, translate languages, and even create content such as poems or code, based on the prompts they receive.

2. LLM, LLM Software, Chatbot, and Generative AI

There is often confusion between large language models (LLMs) and LLM software. For instance, many people refer to ChatGPT as an LLM, which can be misleading. Understanding the distinctions between the two, and between related concepts such as generative AI and chatbots, helps clarify the role each technology plays in the broader AI ecosystem.

As mentioned earlier, LLMs are deep neural network models built on the transformer architecture to understand, generate, and manipulate human language. Examples include OpenAI’s GPT-4o and its predecessors, Meta’s Llama 3 and its predecessors, BERT, T5, and XLNet. These models are trained on extensive text corpora to learn patterns and context, enabling them to perform a variety of NLP tasks (AI Info) (MIT News). LLM software, on the other hand, refers to applications or tools that build on these underlying LLMs to provide specific functionality to end users as interactive, practical products. Examples include ChatGPT, Gemini, and Copilot. For instance, ChatGPT is powered by OpenAI’s GPT-4 family of models, and Meta AI uses Llama 3 as its underlying LLM (MIT News).

Generative AI encompasses systems that create new content, such as text, images, or music, based on learned patterns from training data. This includes techniques like Generative Adversarial Networks (GANs) and diffusion models. While LLMs are a subset of generative AI focusing on text, generative AI spans a broader range of outputs, including visual and audio content. These systems can produce realistic images, generate creative writing, and even design new materials (AI Info) (MIT News).

Chatbots are AI applications designed to simulate human conversation. They can range from simple rule-based systems to advanced models powered by LLMs. Modern chatbots, such as ChatGPT, use LLMs to understand and generate responses in natural language, making interactions more fluid and human-like. They are widely used in customer service, virtual assistants, and interactive interfaces (MIT News).
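To make the distinction between a model and the software built around it more concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `ChatApp`, `RULES`, and `echo_model` are illustrative names, and `echo_model` is only a stand-in for a real LLM call, not any vendor's API. The application layer owns the rules, the conversation history, and the fallback logic, while the model is treated as a plain text-in, text-out function.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical canned replies for a simple rule-based chatbot.
RULES: Dict[str, str] = {
    "hello": "Hi! How can I help you today?",
    "hours": "We are open 9am to 5pm, Monday to Friday.",
}


def echo_model(prompt: str) -> str:
    """Stand-in for an LLM (assumption): a real app would call a model here."""
    return f"[model reply to: {prompt}]"


class ChatApp:
    """The 'LLM software' layer: rules, conversation history, and a model."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self.model = model
        self.history: List[Tuple[str, str]] = []

    def reply(self, message: str) -> str:
        text = message.lower()
        # Rule-based path: answer from a fixed table when a keyword matches.
        for keyword, answer in RULES.items():
            if keyword in text:
                response = answer
                break
        else:
            # LLM-powered path: delegate open-ended input to the model.
            response = self.model(message)
        self.history.append((message, response))
        return response


app = ChatApp(echo_model)
print(app.reply("Hello there"))
print(app.reply("Summarize my last usability test"))
```

Swapping `echo_model` for a different callable changes the underlying model without touching the application, which is roughly the relationship between a chatbot product and the LLM behind it.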

- Use Cases of LLMs in UX Research

LLM applications have a wide variety of use cases in UX research and design. Popular use cases include sentiment analysis, topic modeling, and creating usability testing scenarios. Although general-purpose LLM applications such as ChatGPT, Gemini, and Copilot have assisted UX researchers in designing, planning, and executing research, vendors are now pretraining and optimizing LLMs to perform specific tasks. In this short article, I briefly discuss the various use cases of LLM applications in UX research, then identify 30 popular LLM applications and categorize them according to their use cases. Below is a breakdown of specific use cases for generative AI and LLMs in the context of UX research:

- Augmenting User Research

  - Persona Generation: LLMs can create comprehensive user personas with detailed backgrounds, goals, and pain points. This can help researchers gain richer user insights, even on a limited budget.

  - Synthetic Interview/Survey Data: Generative AI can simulate user interviews or survey responses. While not a substitute for real interaction, this is useful for quick idea validation or early-stage exploration.

  - Usability Test Scenario Creation: LLMs can help craft diverse and realistic scenarios for usability testing, ensuring wider coverage and more robust product evaluation.

- Analyzing and Summarizing User Feedback

  - Sentiment Analysis: LLMs excel at identifying sentiment in large volumes of textual feedback (e.g., open-ended survey responses, social media comments), helping researchers gauge user satisfaction and uncover areas for improvement.

  - Topic Modeling: Generative AI can identify key themes and recurring topics within user feedback, organizing complex data into actionable insights.

  - Summarization: LLMs can provide summaries of lengthy research transcripts, focus group discussions, or extensive user reviews. This saves researchers time and helps isolate the most important points.

- Content Creation for UX Research

  - Mockup/Prototype Generation: Generative AI tools can create low-fidelity wireframes or prototypes based on simple text descriptions. This speeds up the early design process for rapid iteration.

  - Copywriting for Surveys/Questionnaires: LLMs assist in crafting clear, unbiased, and engaging questions, improving the quality and response rate of user surveys.

  - Creating Stimuli: Generative AI can produce images, videos, or other stimuli for use in UX research activities like A/B testing or emotional response studies.

- Enhancing Accessibility

  - Translating User Feedback: LLMs can help translate feedback provided in different languages, facilitating research involving a global user base.

  - Converting Text to Speech: Generative AI can convert written materials into audio formats, making research more accessible to users with visual impairments.

  - Alternative Text Descriptions: AI tools can create descriptions for images, providing contextual information for visually impaired users during testing.

- Important Considerations

  - Human-in-the-Loop: Use generative AI as an augmentation tool, not a replacement for human researchers. Expert judgment is vital.

  - Transparency: Clearly disclose the use of generative AI models to research participants.

  - Bias: Continuously monitor and mitigate biases in datasets and models to prevent unfair outputs that affect the user experience.
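The sentiment analysis use case and the human-in-the-loop consideration above can be sketched together in Python. This is an illustration under stated assumptions, not a reference implementation: `build_prompt`, `classify_feedback`, and `fake_llm` are hypothetical names, and `fake_llm` is a keyword-based stub standing in for a real model call. The point is the workflow: ask the model for a constrained label, validate the answer, and route anything unexpected to a human researcher.

```python
from collections import Counter
from typing import Callable, Dict, List

LABELS = ("positive", "negative", "neutral")


def build_prompt(comment: str) -> str:
    """Ask for exactly one label so the reply is easy to validate."""
    return (
        "Classify the sentiment of this user feedback as "
        "positive, negative, or neutral. Reply with one word.\n"
        f"Feedback: {comment}"
    )


def fake_llm(prompt: str) -> str:
    """Keyword stub standing in for a real LLM call (assumption)."""
    feedback = prompt.split("Feedback:", 1)[1].lower()
    if "love" in feedback or "easy" in feedback:
        return "Positive"
    if "confusing" in feedback or "slow" in feedback:
        return "negative"
    return "I am not sure."


def classify_feedback(
    comments: List[str], llm: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Label each comment; flag unusable model replies for human review."""
    results = []
    for comment in comments:
        label = llm(build_prompt(comment)).strip().lower()
        if label not in LABELS:
            # Human-in-the-loop: never guess when the reply is off-format.
            label = "needs_review"
        results.append({"comment": comment, "label": label})
    return results


comments = [
    "I love how easy the checkout is.",
    "The settings page is confusing.",
    "It works.",
]
results = classify_feedback(comments, fake_llm)
summary = Counter(r["label"] for r in results)
print(summary)
```

Constraining the model to a fixed label set keeps the output easy to tally, and the `needs_review` bucket gives researchers an explicit queue of items where expert judgment is still required.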